1. Introduce a growth mindset curriculum to help students build critical skills such as problem solving, risk-taking, opportunity spotting, and design thinking.

2. Enhance the repositioning of the education system towards STEM, robotics, artificial intelligence, and vocational skills to cope with the demands of the Fourth Industrial Revolution and job creation.

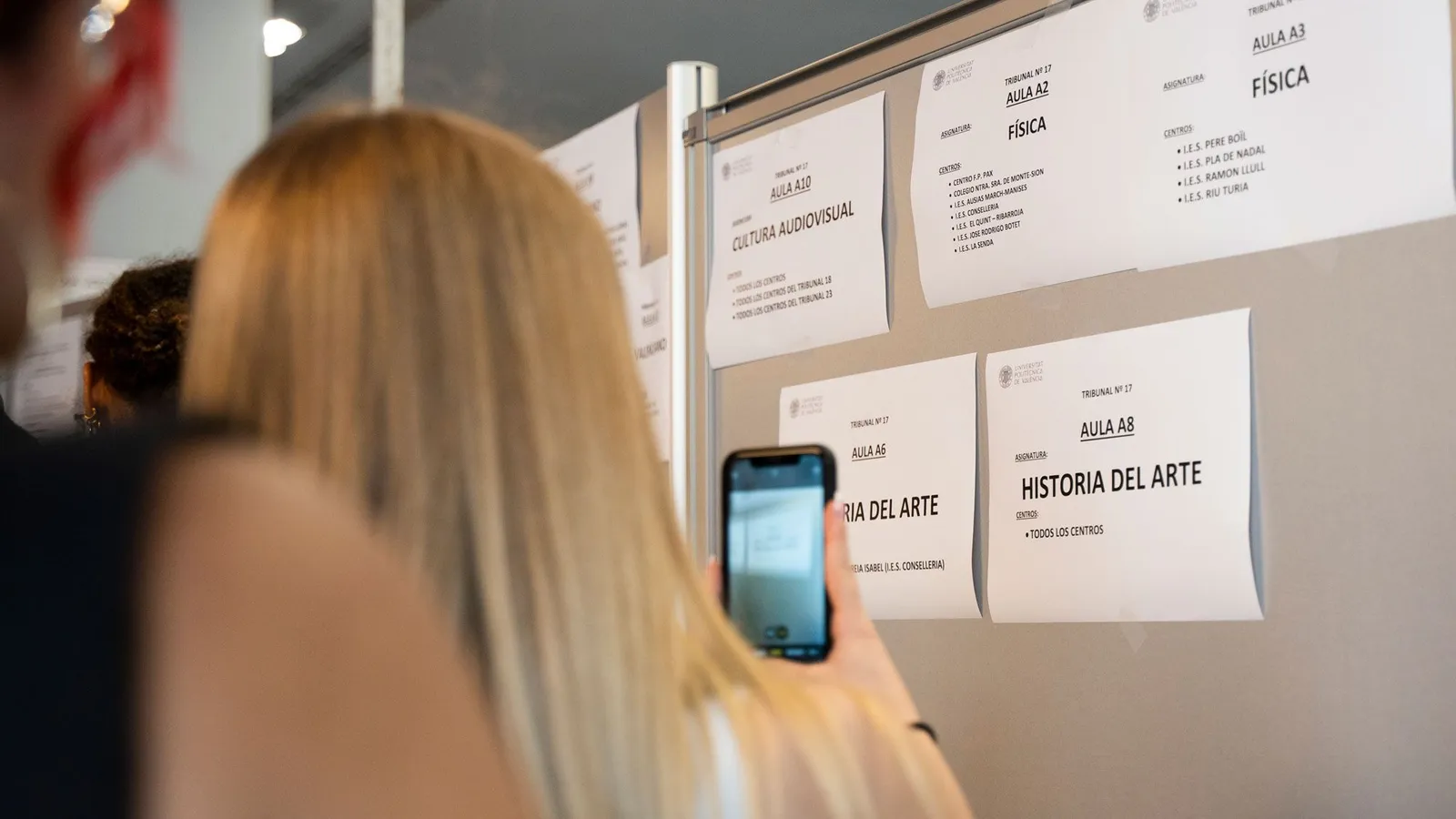

3. Expand infrastructure at medical schools as well as the Ghana Law School to support increased admission of students to medical and legal studies.

4. Enhance fiscal discipline through an independent fiscal responsibility council enshrined in the Fiscal Responsibility Act, 2018 (Act 982).

5. Reduce the number of Ministers to 50

6. The Fiscal Responsibility Act will also be amended to add a fiscal rule requiring that budgeted expenditure in any year does not exceed 105% of the previous year's tax revenue.

7. Reduce the fiscal burden on government by leveraging the private sector.

8. Introduce a very simple, citizen- and business-friendly flat tax regime: a flat tax of a % of income for individuals and SMEs (which constitute 98% of all businesses in Ghana), with appropriate exemption thresholds set to protect the poor.

9. Tax amnesty

10. Electronic and faceless audits by GRA

11. No taxes on digital payments. The e-levy will therefore be abolished.

12. No VAT on electricity (if still on the books)

13. No emissions tax

14. No betting tax

15. Tema port will be fully automated.

16. Align the duties and charges at Tema port with those at Lome Port under a new policy.

17. Duties for spare parts importers will be set at a flat rate per container (20- or 40-foot).

18. In collaboration with the private sector, we will train at least 1,000,000 youth in IT skills, including as software developers, to provide job opportunities worldwide.

19. Empower the private sector to create modern, sustainable and well-paying jobs for the youth.

20. Reduce the cost of data by working with industry players to set clear policy guidelines that remove investor uncertainty and difficulties in business planning.

21. Expeditious allocation of spectrum.

22. Make it easy for Ghanaians to obtain passports. Under my government, any Ghanacard holder will only have to pay a fee for a passport.

23. Introduce an e-visa policy for all international visitors to Ghana to enable visas to be obtained in minutes, subject to security and criminal checks.

24. Attain food security through the application of technology and irrigation to commercial large-scale farming.

25. Promote the use of agricultural lime to reduce the acidity of our soils, enhance soil fertility and get more yield from the application of fertilizers.

26. Prioritize the construction of the Pwalugu Dam by using private sector financing to crowd in grant financing.

27. Adoption of electric vehicles for public transportation.

28. Partner with the private sector to build large housing estates without the government having to borrow or spend.

29. The National Rental Assistance Scheme (which is working so well) will be enhanced to deal with the problem of demands for rent advances of two years or more.

30. Diversify the generation mix by introducing 2,000 MW of solar power and additional wind power through independent power producers.

31. More private sector participation in generation and retail.

32. No import duty on solar panels.

33. License all miners doing responsible mining.

34. As long as miners mine within the limits of their licenses (e.g., no mining in rivers or water bodies), there will no longer be any seizure or burning of excavators.

35. Fully decentralize the Minerals Commission as well as the Environmental Protection Agency (EPA) and ensure that they are present in all mining districts.

36. In collaboration with the large mining companies, convert abandoned shafts into community mining schemes.

37. Open more new community mining schemes.

38. District mining committees should be responsible for reclamation and replanting.

39. Introduce a pension scheme for small-scale miners, as we have done for cocoa farmers.

40. Introduce vocational and skills training on sustainable mining for small-scale miners in the curriculum of TVET institutions.

41. Provide equipment to government authorities in mining communities to undertake reclamation of land.

42. We will set up state-of-the-art, common-user gold processing units in mining districts in collaboration with the private sector.

43. Conduct an audit of all concessions with various licenses and new applications.

44. Abolish the VAT on exploration services (like assaying) to encourage more exploration.

45. Establish, in collaboration with the private sector, a Minerals Development Bank to support the mining industry.

46. Establish (through the private sector) a London Bullion Market Association (LBMA) certified gold refinery in Ghana within four years.

47. All responsibly mined small-scale gold will be sold to the central bank, PMMC or MIIF and will be required to be refined before export.

48. Engage exploration experts from the universities and geological institutions to assist in exploring our seven gold belts.

49. Provide the Geological Survey Department and our universities with resources annually to undertake a mapping of areas where we have gold reserves.

50. Build Ghana’s gold reserves appreciably to reach a point when we have sufficient gold reserves to keep our external payments position sustainably strong.

51. Protect local industry from smuggled imports that evade import duties.

52. Special Economic Zones (Free Zones) will also be created in collaboration with the private sector at Ghana’s major border towns such as Aflao, Paga, Elubo, Sankasi and Tatale to enhance economic activity, increase exports, reduce smuggling and create jobs.

53. Individualized credit scoring

54. Digitalization of land titling and transfer

55. Propose to amend Article 87 of the 1992 Constitution as well as the NDPC Act (Act 479) to mandate that political party manifestoes, and consequently the economic and social policies of governments, as well as budgets, be aligned to the agreed-on broad contours in specific sectors.

56. Amend the 1992 Constitution, with extensive public consultation and key emphasis on issues such as reducing the power of the President and empowering other institutions, ex gratia, the rights of dual citizens, and the election of MMDCEs to deepen decentralization, among others.

57. Prioritise the creation of incentives for corporate sponsorship as a sustainable model of financing sports development and promotion for our national teams.

58. Establish the Ghana School Sports Secretariat, which will be an agency under the ministry responsible for sports, in collaboration with other stakeholders such as the GES and sports federations.

59. Leverage technology, data and systems to improve healthcare.

60. Expand infrastructure at medical schools and improve human capital development.

61. Introduce digital and streaming platforms for our artists to make tourism and the creative arts a growth pole in Ghana.

62. Tax incentives will also be provided for film producers and musicians.

63. Implement a visa-on-arrival policy for all international visitors to Ghana as has recently been implemented by Kenya.

64. Recruit 1,000 special education teachers and retrain teachers on how to work with special needs students.

65. Train more speech and language therapists and occupational and behavioural therapists.

66. Fiscal and administrative decentralization

67. Empower the private sector to build roads, hospitals, and schools.

68. Prioritize the full implementation of the Affirmative Action Act, which should hopefully have been passed by January 2025.

69. After completing their education, those who can secure jobs will be exempted from national service. National service will no longer be mandatory.

70. Seek school-level collaboration with international sports bodies like the NBA and NFL to make Ghana a hub for these emerging sports in Africa, to create more opportunities for young people.
