A recent report reveals that a network of 171 bot accounts on X (formerly Twitter) has been using ChatGPT-generated content to spread disinformation ahead of Ghana’s 2024 presidential elections.

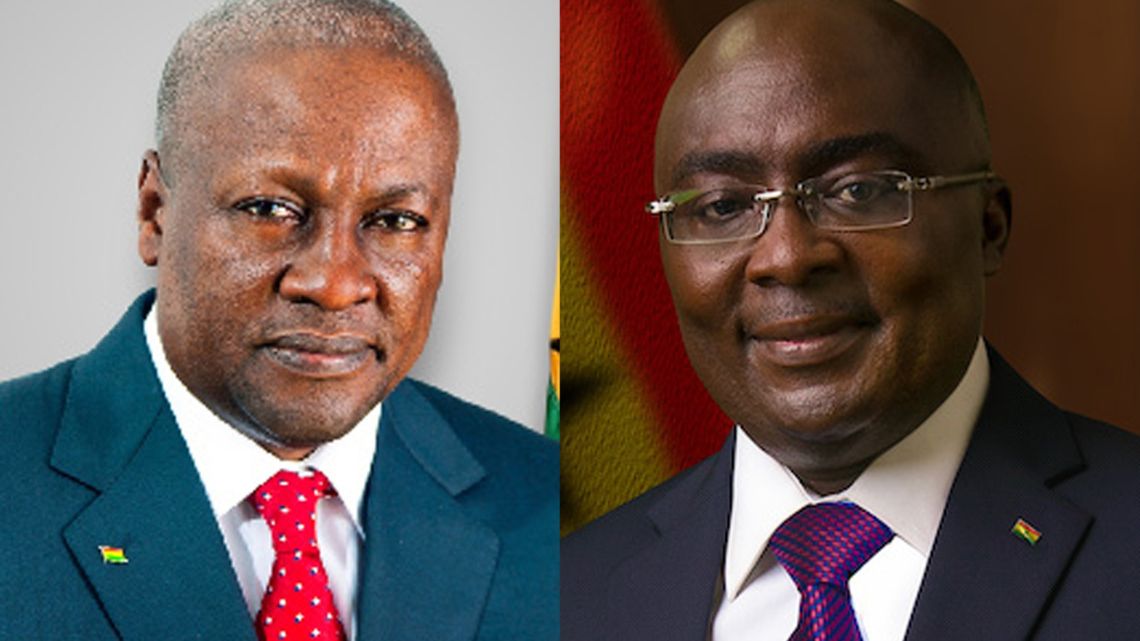

The accounts, which have been active since February, consistently promote the ruling New Patriotic Party (NPP) and its presidential candidate, Mahamudu Bawumia, while disparaging the opposition National Democratic Congress (NDC) and its candidate, John Mahama, according to NewsGuard.

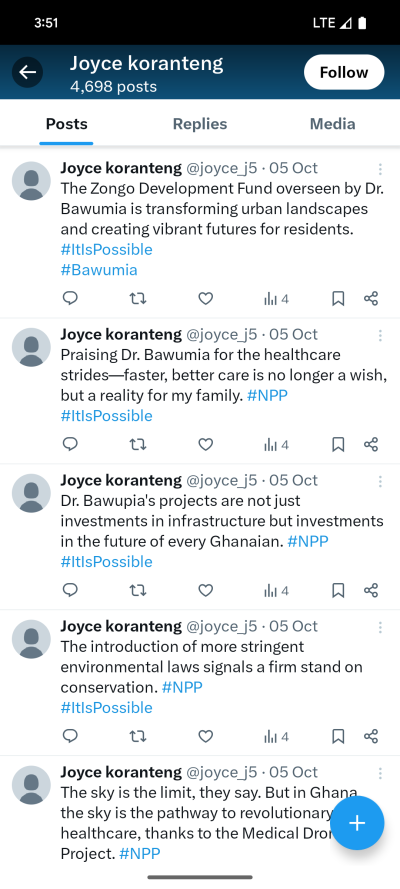

The bots employ popular hashtags such as #Bawumia2024, #ItIsPossible, and #NPP, and often push right-wing talking points. They also engage in negative campaigning against Mahama, with posts falsely accusing him of being a drunkard, a claim he has denied. The accounts, which use AI-generated profile photos and names like “Glenn Washington” and “Patriot,” post at predictable intervals, and their content is designed to amplify pro-NPP messaging.

The findings were part of a study by NewsGuard, a website that tracks misinformation. Researchers used AI tools to analyze the posts, concluding that the content was highly likely to have been generated by ChatGPT. The bots’ activities reflect the growing use of AI by political influence networks, particularly during vulnerable political periods like elections.

The research also highlights how X’s reduced content moderation under Elon Musk’s leadership has made it easier for such disinformation campaigns to proliferate. Despite the findings, only two of the accounts have been suspended so far, with little immediate response from X or OpenAI, the creator of ChatGPT.

NewsGuard’s report identifies this as one of the first instances of an AI-driven, partisan bot network designed to influence elections in Ghana, though the full impact of these accounts on the election’s discourse remains unclear.
